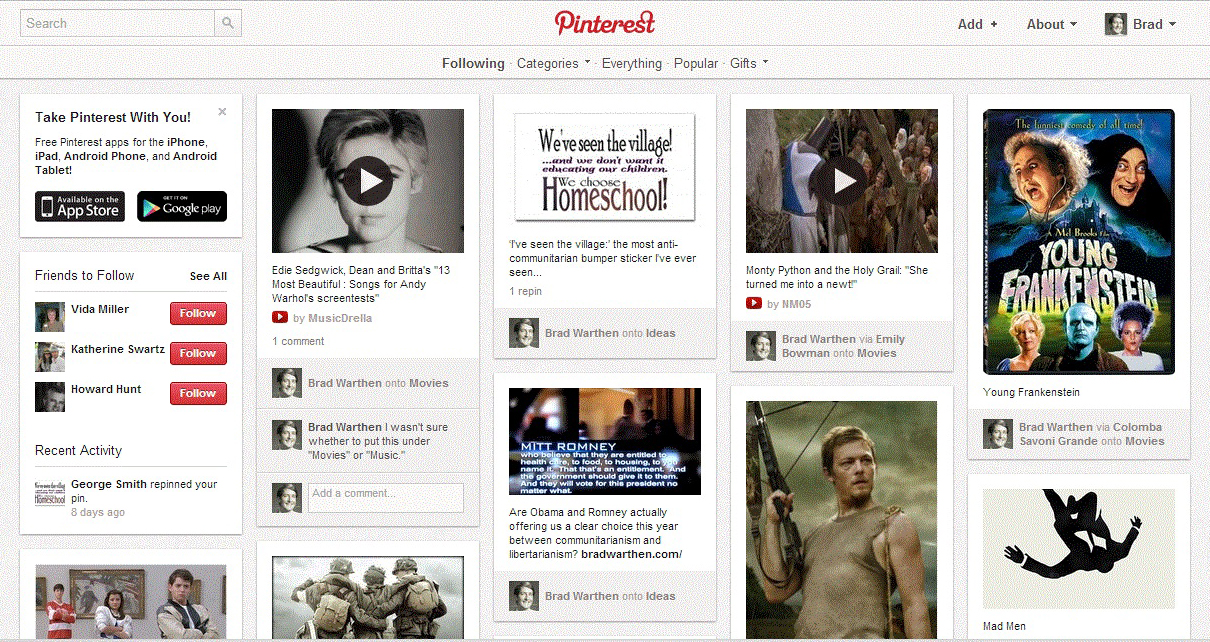

Back on this post from yesterday, we were having the usual argument about the intrusiveness of private companies vs. the government, and as usual someone said “my use of Google Maps is voluntary,” an assertion which I questioned.

My use of Google Maps and other Google products is no longer in the realm of what I consider to be “voluntary.”

Google is as much a part of the daily infrastructure of my life, and the things I need to get done, as the streets I drive on. I rely on its services in a more direct, frequent and ubiquitous manner than I do on the direct services of the police.

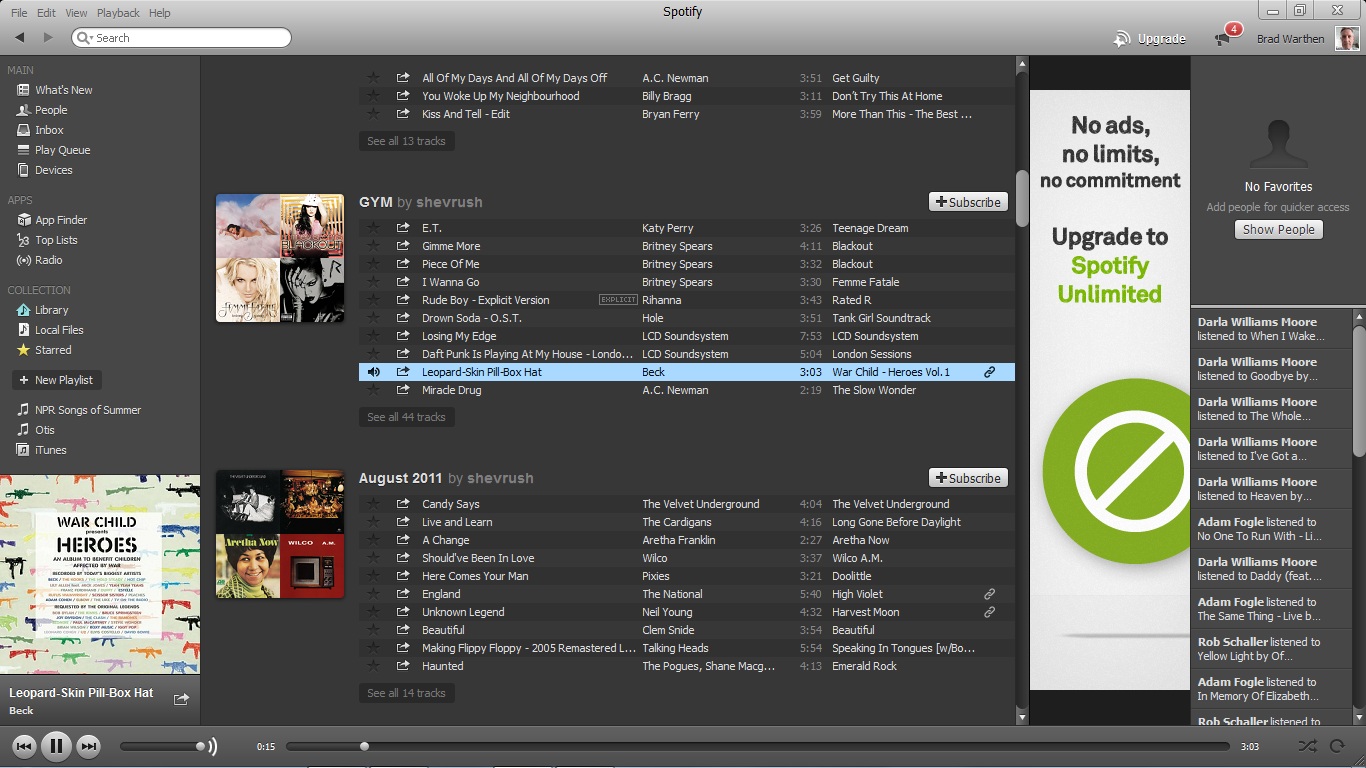

I don’t see how to engage with modern life without it, or something exactly like it. I couldn’t get through a day of ADCO work without it, much less publish this blog. Without Google, both of my active email accounts go away; my browser disappears (the instantaneous searches that occur when you type into the URL field, making it unnecessary to know the address of anything, are indispensable); there’s no YouTube, no really utilitarian Maps program, and then all sorts of other useful things like Google Books, Translate (no longer can I just say, Well, that’s French and I don’t understand French… no excuse), etc. Without Google Images, I have to fall back on my highly flawed memory for names and faces.

One can attempt to drop off the grid and no longer use Google, just as one can drop out of society at large — quit paying one’s taxes, go live in the wilderness off the land. Theoretically, at least.

But the cost of doing either is pretty high…

Yes, there are other services that do these things. But that’s not the point. If Yahoo or AOL had succeeded in being what Google is, or if Facebook were to succeed in being what it wants to be, then it would be the same thing; we’d just be calling it something different. And why ever use competing services for any of these functions, when the very fact that they are all knit together seamlessly magnifies their utility exponentially? I would no more want to switch platforms than I would want to try to leave the roads and drive on a railroad track in my car.

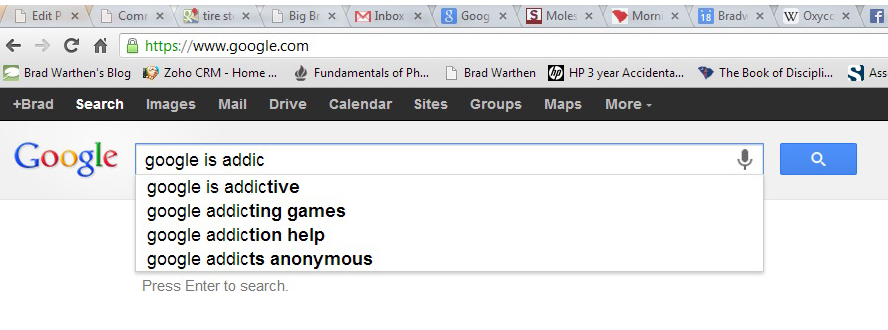

Kathryn writes, “Google is a gateway drug.”

Yes. And more addictive than most.

I always had trouble with being distracted by looking things up. It was just too seductive. A dictionary on my desk was a dangerous thing. I couldn’t look up a word without running across several other words on the way that fascinated me, and each of them led to other words, and on and on.

Fortunately, I had a good vocabulary, and seldom really needed to look up a word.

But now that I can, instantly, look up anything, I cannot stop doing it. A thought about a word or a fact that causes my brain to wonder or doubt even slightly (something I have always done, constantly; it’s just that for the first decades of my life it was harder to scratch that itch) sends me on an immediate search.

For instance, last night I watched “Looper.” Almost immediately, I wondered who the protagonist was. It looked remotely like Joseph Gordon-Levitt, but the expression and even facial structure were wrong. (It was him, but he wore extensive makeup to make himself look like a young Bruce Willis.) Then I thought, “Isn’t Bruce Willis in this? Why haven’t I seen him?” So I checked, and yeah, he was coming up. I see Emily Blunt’s in it. Isn’t she the girl who… ? Yes, she is. She’s really something. Jeff Daniels is surprisingly good in this. What’s his character’s name again? And so forth… (By the way, the movie wasn’t very satisfying.)

OK, so most of that was IMDB, and IMDB isn’t Google. Yet. But the fact is, I often use Google to flesh out what I find in the movie database, because the info there is pretty sketchy. I like depth in my trivia. I used to do this with my phone, which is always clipped to my belt. Now, I usually have the iPad within reach as well.

In any case, now that it’s possible to look things up constantly, I can’t stop.

You can point to this as a character flaw (or perhaps an illness), and you have a good argument. But aside from the compulsive aspect, a certain amount of this is necessary to practically everything I do, everywhere I go.

Let’s say that a person only really needs to use these services a tenth as much as I do. I could concede that. But if a person doesn’t at least use them that tenth amount, he’s not going to be able to keep pace with the world and interact with other people at the pace that society demands — at least, not in anything I’ve ever done for a living. (Yes, I know that lots and lots of jobs today are still not information-based.)

That puts Google into the realm of essential infrastructure, again like the roads that are a function of government.

At the very least, it puts any assertion that one is not forced to deal with Google (or, for the sake of argument, with some other “private” entity that’s just as useful) on fairly thin ice.